The tech industry is abuzz as Apple’s highly anticipated AI smart glasses, and the gesture control system behind them, are finally coming into focus in 2026. Following its reported pivot away from the heavy, expensive Vision Pro headset, Apple is developing a sleek, lightweight pair of AI smart glasses designed to rival products like the Ray-Ban Meta glasses directly. Rather than relying on bulky mixed-reality goggles, Apple is embracing ambient computing. By pairing advanced gesture tracking with a dramatically upgraded Siri assistant, Apple is preparing to redefine how we interact with technology on the go, without any need for a traditional display.

This strategic shift could mark a major step toward mainstream wearable adoption. By prioritizing comfort, aesthetic appeal, and intuitive input methods, Apple aims to solve the fundamental wearability problems that held back its previous spatial computing efforts.

The Vision Pro Successor is Screenless: Dual Cameras and Gestures

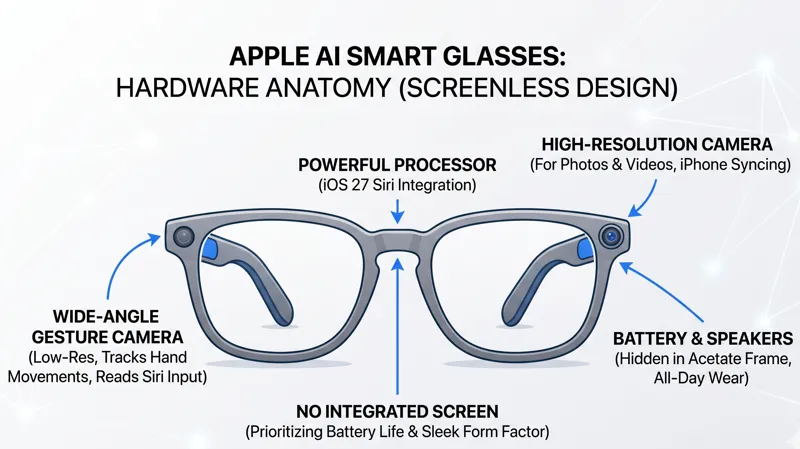

According to insider leaks, the most striking aspect of Apple’s upcoming wearable is that it has no integrated screen at all. Instead of asking users to look at digital overlays, the device relies on a dual-camera array. The primary camera is a high-resolution sensor dedicated to capturing photos and recording videos from a first-person perspective, which are expected to sync with your iPhone for easy sharing on social media.

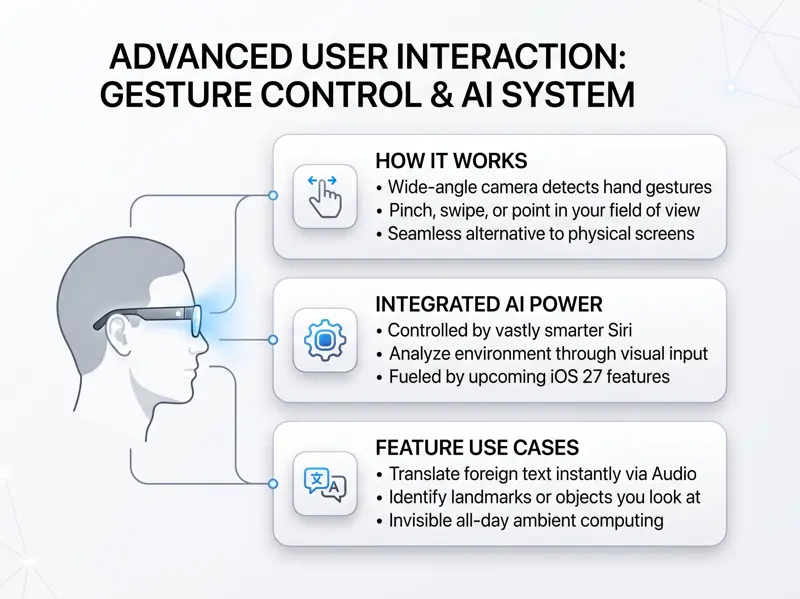

The magic, however, lies in the secondary camera. This lower-resolution, wide-angle lens is positioned specifically to read hand gestures and provide continuous visual input for Siri. Because there is no graphical user interface to tap or swipe, hand movements within the camera’s view will serve as the primary way to navigate. Apple already developed robust hand-tracking algorithms for the Vision Pro, and shrinking that technology down to fit into standard eyewear would be a major engineering feat.

“By removing the screen entirely, Apple is betting that invisible AI and intuitive hand gestures are the true future of seamless, all-day ambient computing.”

| Feature Set | Apple AI Smart Glasses | Ray-Ban Meta Glasses |
|---|---|---|
| Primary Input Method | Siri Voice & Hand Gestures | Meta AI Voice & Touchpad |
| Camera Setup | Dual (High-Res Media + Gesture Tracker) | Single (Media & AI Vision) |
| Integrated Display | None (Audio/Gesture focused) | None (Audio focused) |

Battery Limits and Future Potential

While tech enthusiasts might be disappointed by the lack of augmented reality (AR) holograms, this stripped-down approach is reportedly a direct result of strict battery life constraints. Apple’s design philosophy mandates that these glasses be slim, lightweight, and indistinguishable from traditional designer eyewear. Integrating power-hungry components like micro-OLED displays, LiDAR scanners, or 3D depth cameras would require bulky batteries, ruining both the aesthetics and the comfort.

To achieve this lightweight profile, rumors suggest Apple is testing multiple frame styles made from acetate. Acetate is a premium, plant-based material widely used in high-end eyewear; it is durable, flexible, and comfortable, making it well suited to housing delicate electronics without burdening the wearer’s face.

“Battery physics dictate wearable design; dropping AR displays allows Apple to deliver all-day battery life in a frame that users actually want to wear in public.”

For those tracking the long-term roadmap of Apple’s spatial computing hardware, you can follow ongoing hardware leaks via the MacRumors Apple News Hub to see how this technology evolves.

| Hardware Component | Design Decision | Reasoning |
|---|---|---|
| Frame Material | Plant-based Acetate | Lightweight, flexible, and visually appealing |
| LiDAR Scanner | Excluded | Requires excessive battery power and space |
| Visual Displays | Excluded | Too energy-intensive for a slim form factor |

iOS 27 and the Launch Timeline

Much of the brainpower behind these smart glasses will live not on the frame itself but on your iPhone. The glasses are designed to integrate with a vastly smarter version of Siri, which Apple reportedly plans to introduce in iOS 27. Users will be able to look at a foreign-language menu, a historical landmark, or a complex math problem and simply ask Siri to translate, identify, or solve whatever the wide-angle camera is currently seeing.

Industry analysts expect Apple to officially preview the device late this year as an early look for developers, with a full consumer launch slated for 2027. This timeline gives Apple ample opportunity to refine its gesture recognition algorithms and polish the iOS 27 Siri experience.

Frequently Asked Questions

Will the Apple AI smart glasses have a screen?

No. The first generation of Apple’s smart glasses is not expected to feature an integrated display, holograms, or AR overlays, in order to save battery life.

How do you control the screenless Apple glasses?

You will control the glasses using voice commands via Siri and physical hand gestures, which are tracked by a dedicated low-resolution, wide-angle camera.

Can these glasses take photos and videos?

Yes, the glasses will include a separate high-resolution camera specifically designed to capture first-person photos and videos.

Why did Apple choose not to include LiDAR or 3D cameras?

Features like LiDAR and 3D depth mapping are highly energy-intensive. Leaving them out allows Apple to keep the battery small and the glasses lightweight.

What material will the frames be made from?

Rumors indicate Apple is testing frames made from acetate, a lightweight, plant-based material common in premium eyewear and valued for its flexibility, durability, and comfort.

Who are the main competitors for this new device?

Apple is positioning these smart glasses to compete directly with the highly successful Ray-Ban Meta smart glasses.

When will the Apple AI smart glasses be released?

Apple is expected to preview the hardware late this year, with an official retail launch heavily rumored for 2027; the glasses are expected to rely on the upgraded Siri arriving with iOS 27.

Disclaimer: This article is for informational purposes only. The features, materials, and launch timelines discussed are based on industry leaks and rumors, and are subject to change by Apple prior to the official release.