The highly anticipated iOS 27 Siri Chatbot is shaping up to be one of the most significant software overhauls in the history of the iPhone. For years, Apple users have relied on Siri for basic tasks like setting timers, checking the weather, or placing quick phone calls. However, as the artificial intelligence landscape rapidly evolved, the traditional voice assistant began to feel painfully outdated next to advanced conversational models. In 2026, Apple is finally ready to strike back. We are less than three months away from the Worldwide Developers Conference (WWDC), and leaks indicate that iOS 27, iPadOS 27, and macOS 27 will introduce a fully reimagined, standalone Siri experience that works much like ChatGPT, Claude, and Gemini.

This is not just a minor under-the-hood tweak; it is a fundamental paradigm shift in how users will interact with their Apple devices. By transforming Siri from a simple voice-command utility into a deeply integrated, context-aware chatbot, Apple is making an aggressive bid to reclaim its position in the AI race. Let us dive deep into the five massive upgrades that will redefine your daily smartphone experience.

1. The Google Gemini Apple Partnership and Custom Architecture

Perhaps the most surprising revelation about the new iOS 27 Siri app is the engine reportedly powering it. Developing a world-class large language model (LLM) from scratch requires years of iteration and massive server infrastructure. To accelerate this process, Apple has reportedly turned to Google: under the Google Gemini Apple partnership, Gemini technology will serve as the backbone for the next generation of Apple Foundation Models.

According to industry insiders, the model driving the new Siri is a custom variant developed by the Google Gemini team and reportedly comparable in power and nuance to Gemini 3. Because serving billions of daily queries from active iPhone users demands enormous computational power, Apple and Google are said to be testing cloud infrastructure built on Google's Tensor Processing Units (TPUs). The result is a hybrid approach: on-device processing handles sensitive personal data, while the heavy lifting for complex queries is offloaded to the cloud.

“The integration of Google’s custom Gemini architecture into Apple’s ecosystem represents a monumental shift in tech alliances, bringing unprecedented generative power directly to the iPhone.”

2. The Introduction of a Standalone iOS 27 Siri App

Since its inception, Siri has existed as a transient overlay—a voice that appears, executes a command, and vanishes. With iOS 27, Apple is introducing a dedicated, standalone application for its assistant. This application will heavily resemble the interfaces of popular platforms like OpenAI’s ChatGPT, fundamentally changing user interaction.

The standalone application will allow users to engage in continuous, multi-turn conversations. Instead of having to phrase a perfect, single-breath command, users can now chat with Siri using natural text or voice. The interface will feature familiar iMessage-style chat bubbles, a searchable history of past conversations, and the ability to pin or favorite specific chats for quick reference. When you open the application, you will be greeted with intelligent, context-aware prompts suggesting tasks based on your current routine, location, and device usage.

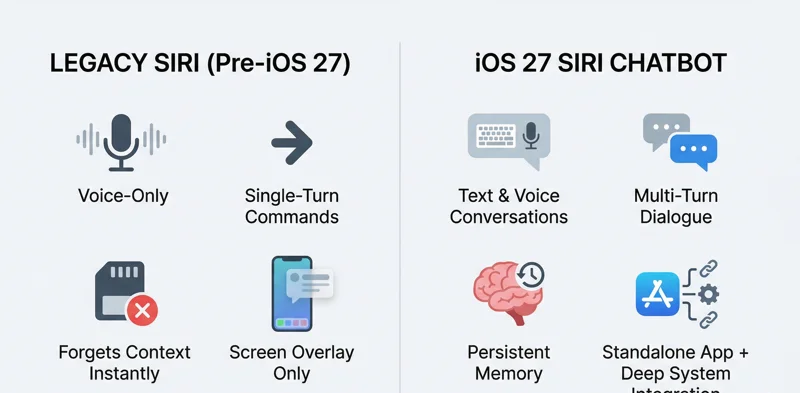

| Feature | Classic Siri (Pre-iOS 27) | iOS 27 Siri Chatbot |
|---|---|---|
| Interaction Mode | Primarily voice, single-turn | Text and voice, multi-turn conversational |
| Memory & History | Forgets context instantly | Saves past chats, remembers user context |
| Interface Type | Screen overlay only | Dedicated app + system overlay |

3. Deep System Integration and the Dynamic Island Siri Redesign

While the standalone app provides a centralized hub for complex queries, Siri’s true power lies in its deep system-level integration. Apple is not just building a chatbot; they are weaving it into the very fabric of the operating system. The most visually striking change will be the Dynamic Island Siri redesign. When activated via the traditional wake word or the side button, the Dynamic Island will illuminate with a glowing, fluid animation indicating that Siri is listening and processing.

Once a request is processed, the Dynamic Island will expand into a larger, translucent panel to display visually rich results without pulling you away from your current app. Furthermore, this deeper integration means Siri will reportedly replace the traditional Spotlight search functionality. By analyzing open windows and on-screen content, Siri can take direct action. If you are reading an article, you can simply ask Siri to summarize it. If you are viewing an email, you can ask Siri to extract the attached file and generate a polite reply.

“By replacing traditional Spotlight search and embedding contextual awareness into the Dynamic Island, Apple is ensuring that AI becomes an invisible, frictionless extension of the user’s thought process.”

Unleashing Apple Intelligence Features Across Core Apps

The highly anticipated Apple Intelligence features, some of which were delayed from previous software iterations, will finally reach their full potential. Siri will have unprecedented access to interact with Apple’s core applications. You will be able to instruct Siri to find a specific photo of your dog from three years ago, apply a specific edit to it, and attach it to a newly drafted email—all through a single conversational prompt.

| Core Application | New iOS 27 Siri Capabilities |
|---|---|
| Photos | Search by complex visual descriptions, auto-edit, and generate albums. |
| Mail & Messages | Ingest full threads, summarize conversations, and draft context-aware replies. |
| Xcode & Developer Tools | Analyze code snippets, debug errors, and suggest programming optimizations. |
| Apple TV | Recommend shows based on highly specific moods, genres, or actor combinations. |

4. Third-Party Extensions and Unprecedented User Choice

In a surprising move away from its traditionally closed ecosystem, Apple will reportedly allow deep third-party AI chatbot integrations within iOS 27. Recognizing that different models excel at different tasks, Apple is introducing an "Extensions" option within the Apple Intelligence section of the Settings app. If a user prefers the coding proficiency of Claude or the analytical style of OpenAI's ChatGPT, they can route their queries through Siri to these third-party services.

For example, if you have the Claude app installed on your iPhone, you can prompt Siri with a complex logic question and append "ask Claude." Siri will hand the query off to the third-party model and return the result within the native Apple UI. This flexibility means iPhone users are never locked into a single AI ecosystem, turning the device into a universal front end for AI services. Developers should be able to learn more about how these integrations will work once Apple publishes documentation on the official Apple Developer site.

“The introduction of third-party AI extensions is a masterclass in platform strategy, allowing Apple to own the user interface while leveraging the best specialized models the tech industry has to offer.”

5. Retained Memory and Personal Context

The biggest frustration with legacy voice assistants is their total lack of memory. The iOS 27 update will introduce persistent contextual memory to the assistant. Just like Claude or Gemini, the new Siri will remember past interactions, preferences, and personal details shared across conversations. It will leverage your calendar, emails, and daily routines to answer queries with a high degree of personalization.

Apple is currently fine-tuning the exact boundaries of this memory to maintain its strict privacy standards. The goal is to make the AI feel personal and intuitive without compromising the user-data protections that Apple has built its reputation upon. By combining local on-device processing for sensitive data with secure cloud processing for heavy generation, Apple aims to deliver the safest personalized chatbot experience on the market.

| Memory & Context Type | How Siri Uses It in iOS 27 |
|---|---|
| Conversational Memory | Remembers the topic of previous prompts to allow for natural follow-up questions. |
| Semantic Device Context | Reads what is currently displayed on your screen to take immediate action on text or images. |
| Personal Data Graph | Cross-references your contacts, calendar, and location to provide highly specific answers. |

Launch Date Expectations and WWDC AI Announcements

The tech world is eagerly counting down the days. Apple is expected to officially unveil the iOS 27 Siri chatbot, alongside iPadOS 27 and macOS 27, as part of its WWDC AI announcements. The conference is scheduled to kick off on Monday, June 8th. While developers will get their hands on the beta immediately following the keynote, the general public can expect the final, polished release to roll out in the fall, coinciding with the launch of the next-generation iPhone hardware.

Frequently Asked Questions

What exactly is the iOS 27 Siri Chatbot?

It is a complete overhaul of Apple's voice assistant, transforming it into a dedicated app and system-wide feature that works like chatbots built on advanced large language models (such as ChatGPT), capable of text generation, complex reasoning, and multi-turn conversations.

Will Siri still respond to voice commands?

Yes, Siri will still respond to traditional voice wake words and button presses, but it will now also support full text-based conversations via its new standalone application.

How is Google involved in the new Siri update?

Apple has partnered with Google to use a custom version of the Gemini AI model, along with Google’s cloud infrastructure (TPUs), to power the advanced generative capabilities of the new Siri.

What changes are coming to the Dynamic Island?

The Dynamic Island will feature a new glowing UI animation when Siri is processing, expanding into a translucent panel to display rich results without forcing the user to leave their current app.

Can I use other AI models instead of Siri?

Yes, iOS 27 will introduce third-party extensions, allowing users to seamlessly route their Siri queries to other installed chatbot apps like Claude, ChatGPT, or the standard Google Gemini app.

Does the new Siri replace Spotlight search?

Yes, the deep system integration of the new AI assistant will completely replace the traditional Spotlight search functionality, offering much richer and more actionable on-device search results.

When will the iOS 27 Siri update be available?

Apple is expected to announce the feature at WWDC on June 8th, with a developer beta following immediately, and a public release slated for the fall of 2026.

Disclaimer: This article is for informational purposes only. The features, partnerships, and release timelines discussed are based on industry leaks and rumors regarding iOS 27 and are subject to change by Apple before the official software launch.